Control Methods for Stable Interaction

Stable interaction with high-stiffness virtual environments (VEs) remains a challenging issue for kinesthetic haptic devices. In particular, it is well recognized that the maximum impedance achievable with a traditional digital control loop is limited because the controller lacks information. This is caused by time discretization, time delay, position quantization (from using an encoder as the position sensor), and the zero-order hold (ZOH) of the force command during each servo cycle. These effects make the VE generate energy (energy leaks), which eventually leads to instability unless it is dissipated by the intrinsic friction of the device, by the controller, or by damping from the user’s grasp.
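The energy leak caused by the ZOH can be seen in a minimal 1-DOF sketch (an illustration written for this page, not one of the lab's methods). It assumes a linear virtual wall at x = 0 with stiffness K, a user who moves into the wall and back out at a constant speed v, and a force command that is sampled once per servo period T and held; the function `zoh_wall_work` is hypothetical. A continuous spring returns exactly the energy it stored, so any positive net work done by the wall is energy that the sampled controller created.

```python
"""Energy generated by a zero-order-hold virtual wall (1-DOF sketch).

Assumptions (illustrative only): a linear spring wall at x = 0 that pushes
back with F = -K * x when x > 0, a user who moves into the wall and back out
at constant speed, and a force command sampled and held for one period T.
"""


def zoh_wall_work(stiffness: float, period: float, speed: float, steps: int) -> float:
    """Net work (J) done BY the wall ON the user over one in-and-out cycle.

    A passive (continuous) spring would give exactly 0. A positive result
    means the sampled wall generated energy, i.e. an energy leak.
    """
    work = 0.0
    x = 0.0
    # Press in for `steps` servo cycles, then pull out for `steps` cycles.
    for velocity in (speed, -speed):
        for _ in range(steps):
            # Force is computed from the position sampled at the start of the
            # cycle and held constant (ZOH) until the next sample.
            force = -stiffness * x if x > 0.0 else 0.0
            dx = velocity * period
            work += force * dx  # work of the held force over this cycle
            x += dx
    return work


if __name__ == "__main__":
    K, T, v, N = 1000.0, 0.001, 0.1, 100
    generated = zoh_wall_work(K, T, v, N)
    # Closed form for this trajectory: K * v**2 * T**2 * N
    print(f"generated: {generated:.6e} J, closed form: {K * v**2 * T**2 * N:.6e} J")
```

For this trajectory the generated energy has the closed form K·v²·T²·N, where N is the number of servo cycles spent pressing in. With the penetration time N·T held fixed, the leak grows linearly with both K and T, which is one way to see why stiffer walls and slower servo rates are harder to render stably.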

Our lab has proposed several stability-guaranteeing approaches that have been well received in the research community, including, but not limited to, the time-domain passivity approach (TDPA), the input-to-state stable (ISS) approach, the successive force augmentation (SFA) approach, the successive stiffness increment (SSI) approach, and the unidirectional virtual inertia (UVI) approach. In ongoing research, we aim to maximize the achievable impedance range of haptic and telerobotic systems toward highly transparent haptic interaction.
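To illustrate the idea behind TDPA, the following is a minimal one-port sketch of a passivity observer (PO) and a series passivity controller (PC), driven by a sampled spring wall under prescribed user motion. It is a simplified single-step variant written for this page, not the bilateral, delay-robust scheme of the TDPA publication listed below; the class `OnePortTDPA`, the function `simulate`, and the sign convention are assumptions.

```python
from dataclasses import dataclass


@dataclass
class OnePortTDPA:
    """Passivity observer (PO) + series passivity controller (PC), one port.

    Assumed sign convention: `force` is the force the user applies to the
    virtual environment and `velocity` is the device velocity, so
    force * velocity is the power flowing INTO the environment. The port is
    passive while the observed energy stays >= 0.
    """

    period: float        # servo period T (s)
    energy: float = 0.0  # observed energy E_obs (J)

    def step(self, force: float, velocity: float) -> float:
        """Return the force to render for this servo cycle."""
        # PO: energy that would have entered the environment after this cycle.
        e = self.energy + force * velocity * self.period
        alpha = 0.0
        if e < 0.0 and velocity != 0.0:
            # PC: variable damping sized to dissipate the deficit this cycle.
            alpha = -e / (self.period * velocity * velocity)
        # Book the damper's dissipation so the observer tracks the true flow.
        self.energy = e + alpha * velocity * velocity * self.period
        return force + alpha * velocity


def simulate(stiffness: float, period: float, speed: float,
             steps: int, use_pc: bool) -> float:
    """Net energy delivered to a ZOH spring wall over one in-and-out cycle."""
    pc = OnePortTDPA(period)
    x, energy_in = 0.0, 0.0
    for velocity in (speed, -speed):  # press in, then pull out
        for _ in range(steps):
            force = stiffness * x if x > 0.0 else 0.0  # held for one period
            if use_pc:
                force = pc.step(force, velocity)
            energy_in += force * velocity * period
            x += velocity * period
    return energy_in


if __name__ == "__main__":
    print("without PC:", simulate(1000.0, 0.001, 0.1, 100, use_pc=False))
    print("with PC:   ", simulate(1000.0, 0.001, 0.1, 100, use_pc=True))
```

Without the PC, the net energy delivered to the environment ends negative, which is the energy leak. With the PC, the damper stays inactive while the user presses in and switches on only near the end of the release phase, when the observed energy would otherwise drop below zero.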

Researchers: Hyeon-Seok Choi, Huseyin, Seong-Su Park, Nam-Gyun Kim

Selected Publications

• (TDPA) – Ryu, Jee-Hwan, Jordi Artigas, and Carsten Preusche. “A passive bilateral control scheme for a teleoperator with time-varying communication delay.” Mechatronics 20.7 (2010): 812-823.

• (ISS) – Jafari, Aghil, Muhammad Nabeel, and Jee-Hwan Ryu. “The input-to-state stable (ISS) approach for stabilizing haptic interaction with virtual environments.” IEEE Transactions on Robotics 33.4 (2017): 948-963.

• (SFA) – Singh, Harsimran, et al. “Enhancing the rate-hardness of haptic interaction: successive force augmentation approach.” IEEE Transactions on Industrial Electronics 67.1 (2019): 809-819.

• (SSI) – Singh, Harsimran, Aghil Jafari, and Jee-Hwan Ryu. “Multi degree-of-freedom successive stiffness increment approach for high stiffness haptic interaction.” International AsiaHaptics Conference. Springer, Singapore, 2016.

• (UVI) – Choi, Hyeonseok, et al. “Virtual Inertia as an Energy Dissipation Element for Haptic Interfaces.” IEEE Robotics and Automation Letters 7.2 (2022): 2708-2715.

Interactive Autonomy for Shared Teleoperation

Teleoperation can incorporate different levels of autonomy to support the human operator during task execution. Virtual fixtures correspond to one of the lowest levels of autonomy, where the human receives assistance in orienting the tool, following a path, or avoiding dangerous regions of the workspace. However, it is difficult to generate virtual fixtures in unstructured environments. Our lab works on interactive and intuitive methods for generating virtual fixtures that are applicable to a wide range of teleoperation scenarios. In addition, we study how to use various types of virtual fixtures efficiently (e.g., remote and local virtual fixtures) and how to render them depending on the teleoperation conditions, such as time delay and task type.
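To make the idea of a guidance virtual fixture concrete, here is a minimal sketch of one common rendering: a spring force that pulls the tool tip toward the nearest point on a predefined polyline path. The path, the stiffness value, and all function names are hypothetical. The sketch assumes the fixture geometry is already known, which is exactly the part that is hard in unstructured environments and that the lab's interactive generation methods address.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def closest_point_on_segment(p: Vec3, a: Vec3, b: Vec3) -> Vec3:
    """Project p onto segment ab, clamped to the segment's endpoints."""
    ab = sub(b, a)
    length_sq = dot(ab, ab)
    if length_sq == 0.0:  # degenerate segment: both endpoints coincide
        return a
    t = max(0.0, min(1.0, dot(sub(p, a), ab) / length_sq))
    return add(a, scale(ab, t))


def guidance_force(tool: Vec3, path: List[Vec3], stiffness: float) -> Vec3:
    """Spring force pulling the tool tip toward the nearest point on a polyline."""
    best, best_dist_sq = path[0], math.inf
    for a, b in zip(path, path[1:]):
        q = closest_point_on_segment(tool, a, b)
        d = sub(q, tool)
        if dot(d, d) < best_dist_sq:
            best, best_dist_sq = q, dot(d, d)
    return scale(sub(best, tool), stiffness)


if __name__ == "__main__":
    path = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
    print(guidance_force((0.5, 0.1, 0.0), path, stiffness=200.0))
```

For tool positions that project onto the interior of a segment, the force is perpendicular to that segment, so motion along the path is not resisted; this is what distinguishes a guidance fixture from a forbidden-region fixture.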

At the other end of the spectrum is near-full autonomy, where the human helps the computer solve complicated problems such as motion planning. Our lab has introduced an approach that captures human intuition through a haptic device to reduce the complexity of path-planning algorithms in cluttered environments. At IRiS Lab, we are exploring various levels of autonomy and human-robot interaction techniques to improve teleoperation.

Researchers: Kwang-Hyun Lee, Dong-Hyeon Kim

Selected Publications

• Pruks, Vitalii, and Jee-Hwan Ryu. “Method for generating real-time interactive virtual fixture for shared teleoperation in unknown environments.” The International Journal of Robotics Research (2022): 02783649221102980.

• Lee, Kwang-Hyun, Vitalii Pruks, and Jee-Hwan Ryu. “Development of shared autonomy and virtual guidance generation system for human interactive teleoperation.” 2017 14th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI). IEEE, 2017.

• Lee, Kwang-Hyun, Usman Mehmood, and Jee-Hwan Ryu. “Development of the human interactive autonomy for the shared teleoperation of mobile robots.” 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2016.

Skill Transfer Through Teleoperation

Direct teleoperation is useful and has many potential applications. However, it imposes a heavy workload on the human operator, especially for long-duration or repetitive tasks. Autonomous methods can relieve this burden, but it is extremely challenging to program human task skills that require delicate force interaction. To address this problem, Learning from Demonstration (LfD), also known as Programming by Demonstration (PbD), has been proposed. However, conventional LfD cannot be applied directly to teleoperation, because the human must be at the same location as the robot to guide it physically (kinesthetic teaching). Our ongoing research in this area applies LfD through teleoperation to transfer a human expert’s skills to the robot while ensuring task success and minimizing human workload.

Researchers: Joong-Ku Lee, Kwang-Hyun Lee

Selected Publications

• Lee, Joong-Ku, and Jee-Hwan Ryu. “Learning Robotic Rotational Manipulation Skill from Bilateral Teleoperation.” 2022 19th International Conference on Ubiquitous Robots (UR). IEEE, 2022.

• Latifee, Hiba, et al. “Mini-batched online incremental learning through supervisory teleoperation with kinesthetic coupling.” 2020 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2020.

Haptic Device for Intuitive Teleoperation

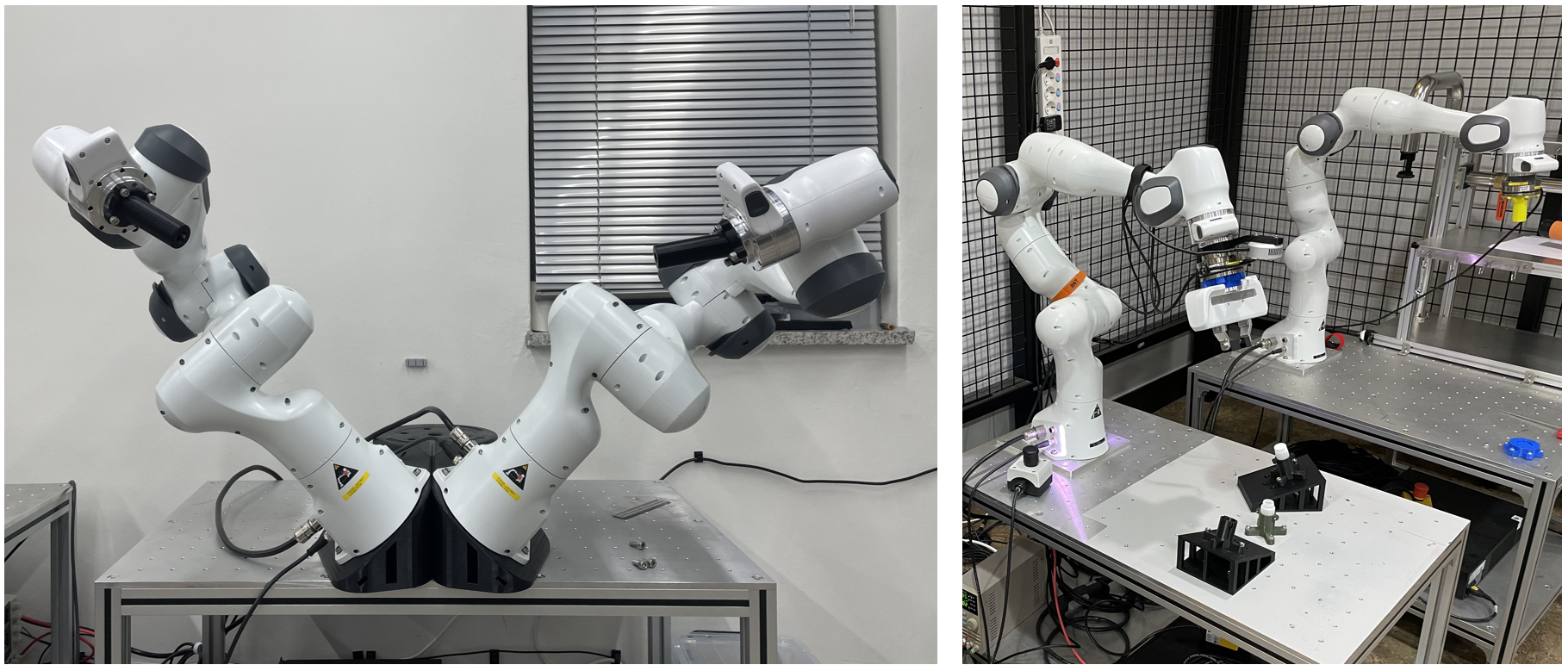

Lack of situational awareness and mismatched hand-eye coordination make teleoperation challenging. Numerous research efforts have addressed these issues. However, most previous studies used conventional general-purpose master interfaces, such as the Phantom, Omega, or Virtuose, which offer limited means of increasing intuitiveness. In IRiS Lab, we have been developing new task-specific or remote-robot-specific haptic interfaces that further enhance intuitiveness through improved situational awareness and intrinsically matched hand-eye coordination. As an initial trial of this concept, we are developing a multi-DoF manipulator-based haptic device for intuitive bilateral teleoperation, as well as modular 3-DoF torque feedback devices that can easily be attached to the tips of commercial haptic devices.

Researchers: Joong-Ku Lee, Huseyin

Equipment

Franka Emika, Panda (4 units)

- 7-DOF Manipulator

- Remote Robot

- Haptic Device

Universal Robots, UR5

- 6-DOF Manipulator

- Remote Robot

Force Dimension, Omega.7

- Parallel-type haptic device

- 6-DOF input

- 4-DOF output

SensAble, Phantom Premium 1.5

- Serial-type haptic device

- 6-DOF input

- 6-DOF output

Clearpath, Husky A200

- Mobile robot

Hyundai, i30

- Teledriving

ATI, Axia80 (4 units)

- 6-DOF force/torque measurement

- Resolution: 1/25 N (force), 1/500 N·m (torque)